WHITE SUPREMACY AND THE NAZIFICATION OF AMERICA

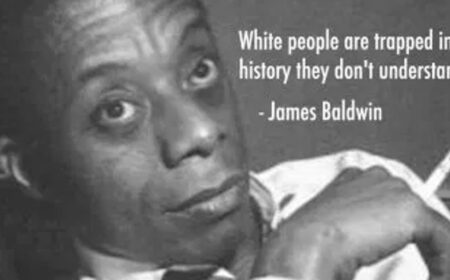

“To be a Negro in this country and to be relatively conscious is to be in a rage almost all the time.”

― James Baldwin

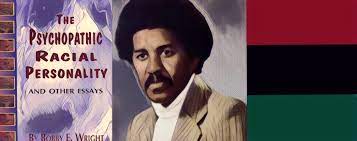

Some historians argue that Nazism is not a foreign idea in American politics. Some even say that German Nazism has its roots in America. If these claims are true, what do they mean for Black people in America?

Podcast (Duration: 2:01:06, 55.4MB)